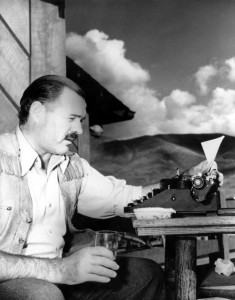

The most essential gift for a good writer is a built-in, shock-proof, shit detector – Ernest Hemingway

And as a bonus, this one calls itself an “app”, so it sounds like an advertising exec trying to be hip: Hemingway App.

Like other sites of its ilk, it works best with writing samples provided by people who are nervous about their own competence. When one tries to validate it with the sort of prose one is generally told to aim for, it doesn’t work so well. For whatever reason, Hemingway always comes out looking bad in these things, but it is particularly ironic in this case, I think. Personally, if I thought Hemingway was awesome and that I’d cracked the code on how he wrote so well, I’d test said magic algorithm on some of his writing before releasing it into the wild. It’s not like it is hard to come by.

The obvious question, of course, is: why does the test fail? (I think we can take it as read that a test designed to make you write like Hemingway has failed when it insists that Hemingway isn’t up to snuff.) Is it because it can’t get out of its own way and properly implement its own strictures? Whoever put it together, for example, doesn’t understand what passive voice is, and while they call various things adverbs that aren’t, they might be even worse at spotting actual adverbs.

Or is it, perhaps, because the rules themselves aren’t quite right? There is a clear danger in using Hemingway for something like this – most such tests pick EB White as their exemplar, but Hemingway wasn’t quite as adamant about telling everyone not to write like he did, at least not to the point of publishing a whole book about it. For White, the algorithm designer can always say that it was designed to test against this book over here, not that other one; for Hemingway, sadly, we just have the stuff where he was trying to write well. Most of his advice on writing does not lend itself so well to a formula. And if Hemingway can get away with adverbs and passive clauses and complicated sentences, how are we supposed to know what to avoid? Read his tips? Those are a bit harder to follow.